I am a postdoctoral researcher in the Image and Video Computing Group at Boston University. I received my PhD in Computer Science at Boston University, advised by Professor Kate Saenko. My research focuses on robust and semantically consistent image generation and editing. I am also interested in label-efficient learning, as well as applications of Computer Vision for Good. Prior to joining BU, I received my BSc and MSc in Computer Science at Kazan Federal University.

dbash [at] bu [dot] edu / CV / short CV / Google Scholar

My primary interests include generative models, image translation and label-efficient learning. My goal is to make generative models more expressive and controllable for artists of all skill levels. To this end, I explore ways to achieve fine-grained semantic control through label-efficient disentanglement and domain alignment. In addition to my work on generative models, I am deeply passionate about applications of AI for the environment.

Dina Bashkirova, Arijit Ray, Rupayan Mallick, Sarah Adel Bargal, Jianming Zhang, Ranjay Krishna, Kate Saenko in submission 2023 project page We propose Lasagna, a layered image editing approach that enables controlled, language-guided object relighting. Lasagna achieves controlled relighting via layered score distillation sampling, which extracts the diffusion model's prior about lighting without changing other crucial aspects of the input image.

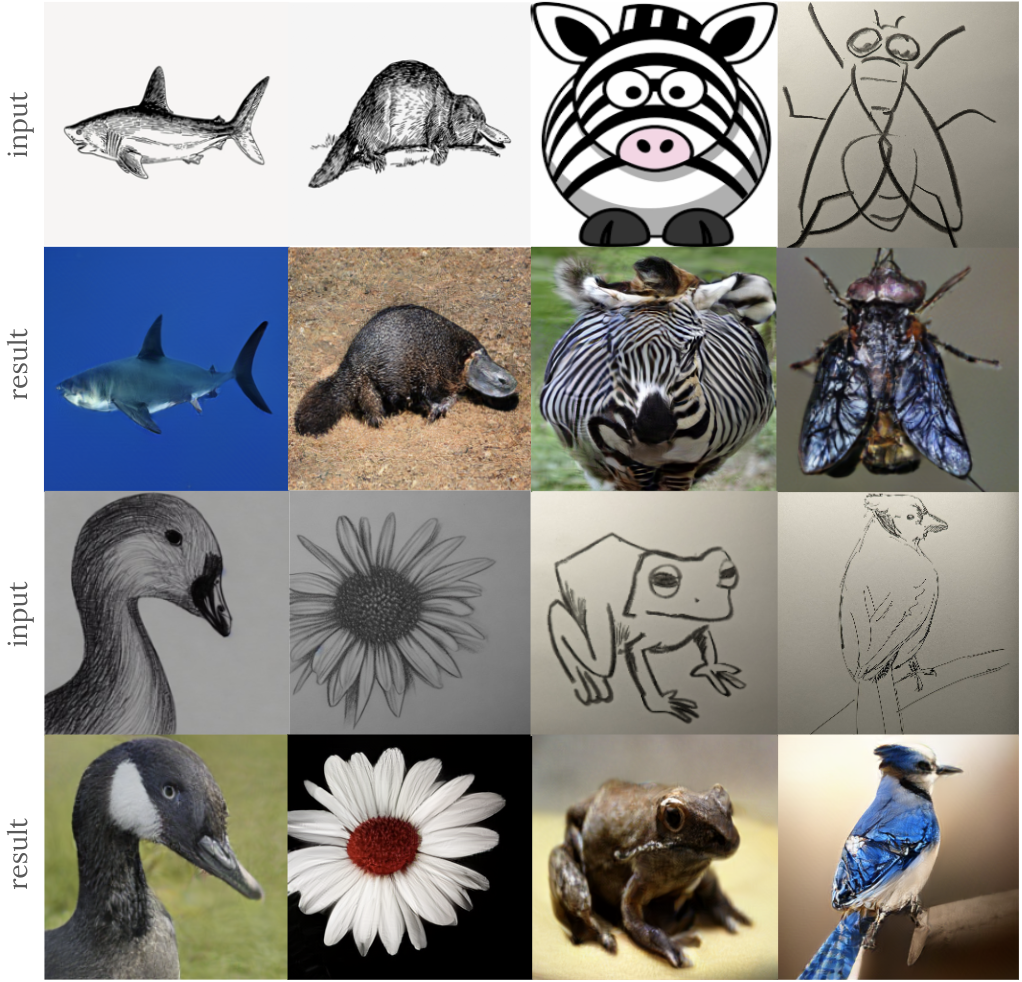

Dina Bashkirova, Jose Lezama, Kihyuk Sohn, Kate Saenko, Irfan Essa CVPR (highlight) 2023 project page In this paper, we introduce MaskSketch, an image generation method that enables spatial conditioning of the generated image by using a guiding sketch as an extra conditioning signal during sampling. Given an input sketch and its class label, MaskSketch samples realistic images that follow the given structure. MaskSketch works on sketches of various degrees of abstraction by leveraging a pre-trained masked image generator, and requires neither model finetuning nor paired supervision.

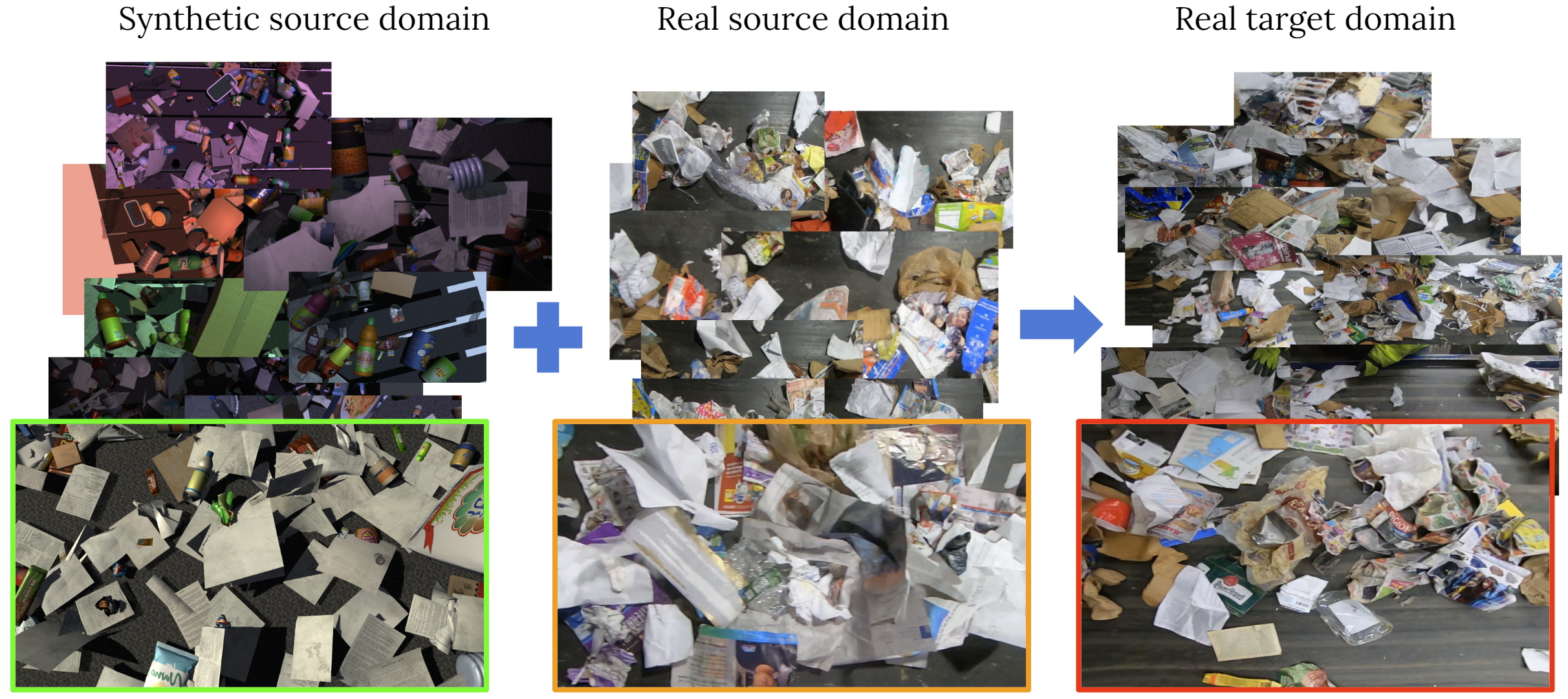

Dina Bashkirova, Samarth Mishra, Diala Lteif, Piotr Teterwak, Donghyun Kim, James Akl, Fadi Alladkani, Berk Calli, Sarah Adel Bargal, Kate Saenko NeurIPS 2022 challenge page We introduce a visual domain adaptation challenge for industrial waste sorting. The large variability in the visual appearance and composition of the waste stream makes generalization especially challenging for automated waste sorting solutions. In this competition, we propose to improve the generalization of waste detection models using SynthWaste, a novel synthetic dataset for waste sorting.

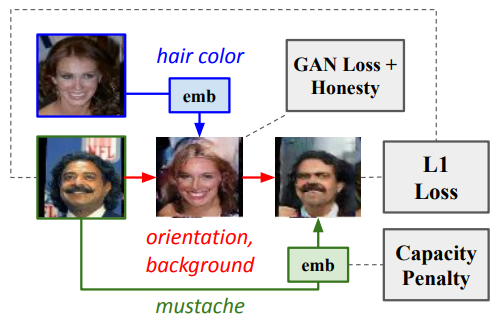

Ben Usman*, Dina Bashkirova*, Kate Saenko WACV 2022 arxiv / bib We propose a new many-to-many image translation method that infers from data which attributes are domain-specific by constraining information flow through the network using translation honesty losses and a penalty on the capacity of the domain-specific embedding, rather than relying on hard-coded architectural inductive biases.

Dina Bashkirova, Mohamed Abdelfattah, Ziliang Zhu, James Akl, Fadi Alladkani, Ping Hu, Vitaly Ablavsky, Berk Calli, Sarah Adel Bargal, Kate Saenko CVPR 2022 arxiv / project page / bib / code We present the first in-the-wild object segmentation dataset for industrial waste sorting, supporting fully-, semi- and weakly-supervised setups. Our ZeroWaste dataset presents a challenging computer vision task: semantic segmentation of extremely cluttered scenes with highly deformable and translucent objects.

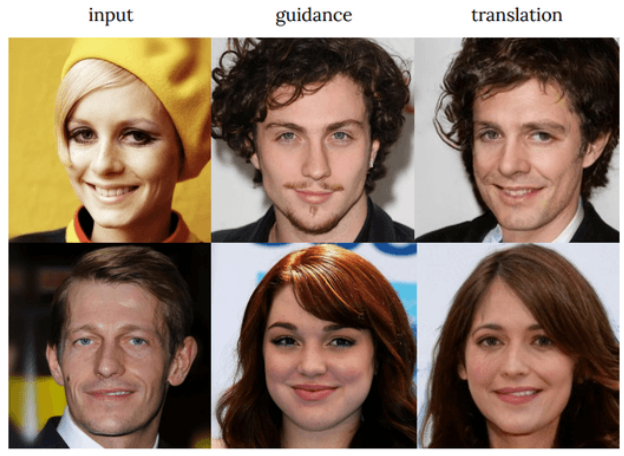

Dina Bashkirova, Ben Usman, Kate Saenko WACV 2022 arxiv / github / bib We propose an evaluation protocol for the disentanglement quality of unsupervised many-to-many image translation (UMI2I) methods. We show that modern UMI2I methods fail to correctly disentangle domain-specific factors from shared ones and mostly rely on their inductive biases to determine which factors should change during translation.

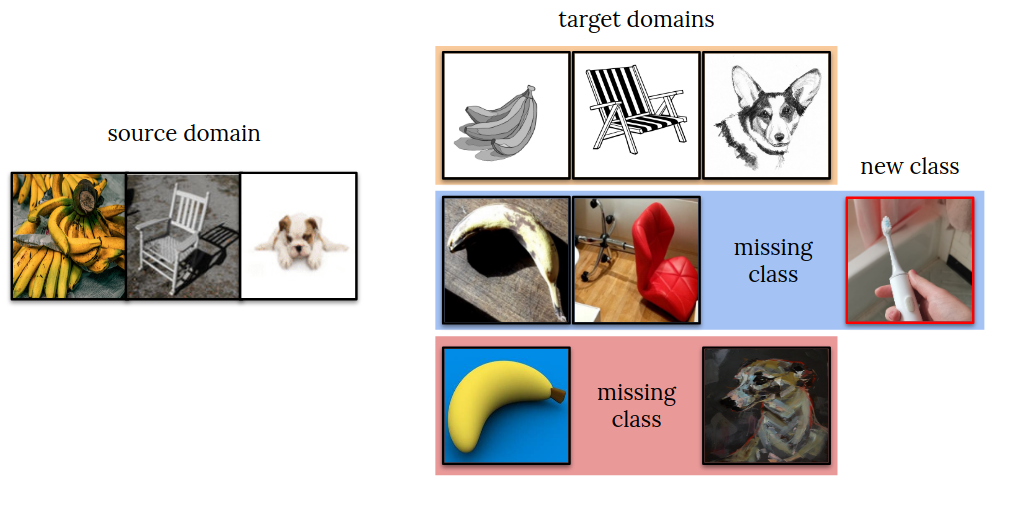

Dina Bashkirova*, Dan Hendrycks*, Donghyun Kim*, Samarth Mishra*, Kate Saenko*, Kuniaki Saito*, Piotr Teterwak*, Ben Usman* (*equal contribution) NeurIPS 2021 challenge page The fifth iteration of the Visual Domain Adaptation (VisDA) Challenge, which targets the universal domain adaptation problem.

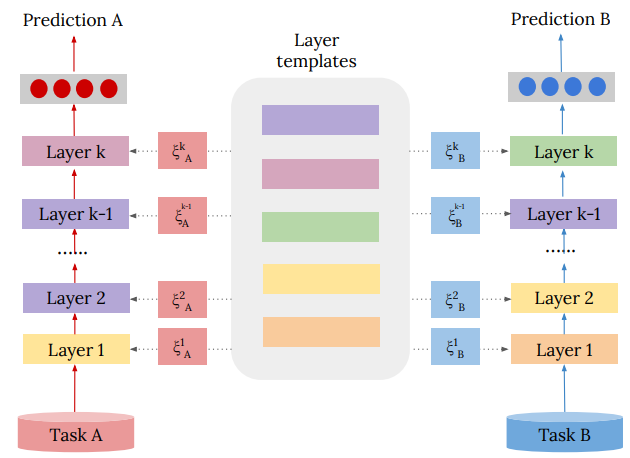

Andrey Zhmoginov, Mark Sandler, Dina Bashkirova arXiv 2020 arxiv / github / bib We propose a novel modular model architecture that enables parameter sharing and reuse across various computer vision tasks, such as multi-task learning, knowledge transfer and domain adaptation.

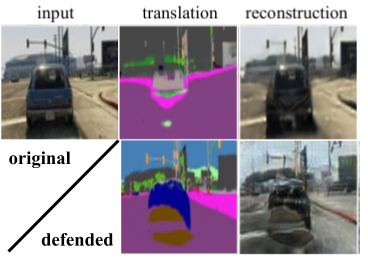

Dina Bashkirova, Ben Usman, Kate Saenko NeurIPS 2019 arxiv / github / project page / poster / proceedings / bib We show that cycle-consistent models perform a self-adversarial attack: they embed low-amplitude structured noise into intermediate generated images in order to reconstruct the input images perfectly. We propose two techniques that prevent this kind of "cheating" and show that defending against such self-adversarial attacks improves translation quality.

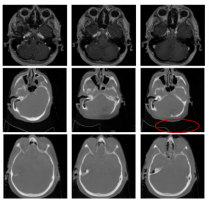

Dina Bashkirova, Ben Usman, Kate Saenko arXiv 2018 arxiv / github / volumetric data / bib We propose a spatiotemporal extension of CycleGAN and show when it performs better than per-frame translation on two new unsupervised video-to-video translation benchmarks, including a novel CT-to-MRI volumetric medical domain.

Dina Bashkirova, Shin Yoshizawa, Roustam Latypov, Hideo Yokota Proceedings of ISP RAS 2017 arxiv / bib We propose a novel approximation method for fast Gaussian convolution of two-dimensional uniform point sets that uses the L1 distance metric in the Gaussian function and a domain-splitting approach to achieve fast computation.

Grader for CS480 Introduction to Computer Graphics in 2018 and CS542 Machine Learning in 2020 and 2021. Reviewer for NeurIPS 2018-2021, ICCV 2021, ICLR 2020, 2021 and 2023, CVPR 2020 and 2023, WACV 2020 and 2021, and the NeurIPS DistShift Workshop 2021. Co-organized the VisDA challenge at NeurIPS 2021 and NeurIPS 2022.

I enjoy reading, singing and playing music with my friends, drawing and handcrafting, weightlifting, playing board games and spending time with my dogs.

Template adapted from Jon Barron's homepage.